Featured Blog | This community-written post highlights the best of what the game industry has to offer.

This is an overview of all the elements which contribute to the dynamic damage system created for Radio Viscera.

Introduction

I've written this article with a focus on the concepts involved rather than on a specific language or environment, and it isn't meant as a step-by-step guide. The implementation described here was built very specifically for the game I was making, so including the technical minutiae did not seem helpful. Hopefully some of this information will help you develop your own project in whichever engine or environment suits you best.

I've tried to insert as many gifs, screenshots and sounds as I could to keep it from being too dry. The last few sections focus on the (less technical) secondary systems and effects that interact with the destruction and should be a bit more digestible.

This design was built in a custom C++ game engine that uses an OpenGL renderer and the Bullet Physics SDK.

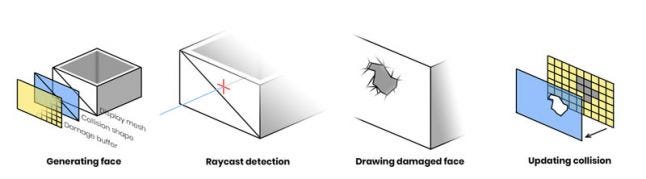

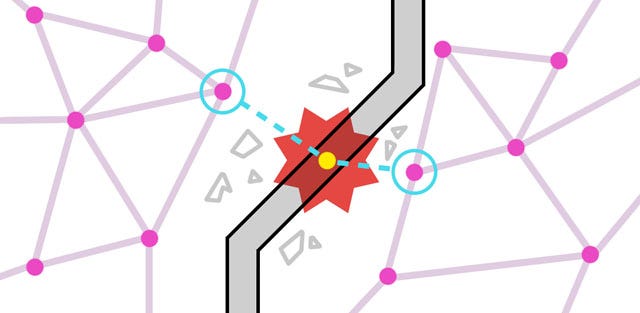

Figure 1. The central concepts behind the system

The destruction system depends on three elements that work together to present the illusion that a hole is being smashed in a wall:

- Parametric geometry: used to render the damaged walls.
- Physics simulation: controls the collision shapes which make the wall solid, performs raycast queries and helps generate triangulated collision shapes when the wall is damaged.
- Render textures: store damage data for each wall, which is fed into the geometry shader and also used as the source for generating collision shapes.

Each destructible face is built from three pieces. The first is the display mesh, a parametrically generated plane with position, normal and UV coordinate data. This is used to render the face in-game.

The second piece is the collision shape. This is a static, collidable rigid body that is added to the dynamics world of the physics simulation. The shape itself is an exact match for the display mesh and is positioned to sit perfectly on top of it. In addition to providing the collision you would expect from a solid wall, it also detects damaging raycast queries (described below).

Figure 2. Initial un-damaged face components (display mesh, collision shape, damage buffer)

The third piece is the damage buffer. This is a 2D render texture used to store the damage state for the face. Its dimensions are calculated by multiplying the size of the face in world space by a "pixels-per-meter" factor, which determines the resolution at which damage is stored. In my case I use 48 pixels per meter, so a wall measuring 4 x 2 meters in world space has a 192 x 96 px damage buffer. This texture is cleared to RGBA [0,0,0,0].
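The sizing rule is simple arithmetic; here is a minimal C++ sketch of it. The names `BufferSize`, `damageBufferSize` and `kPixelsPerMeter` are hypothetical, and rounding up to whole pixels is an assumption, since the article doesn't say how fractional sizes are handled.

```cpp
#include <cassert>
#include <cmath>

// Size of a face's damage buffer in pixels (hypothetical helper type).
struct BufferSize {
    int width;
    int height;
};

// Resolution at which damage is stored; 48 px/m is the value used in the game.
constexpr float kPixelsPerMeter = 48.0f;

BufferSize damageBufferSize(float faceWidthMeters, float faceHeightMeters) {
    assert(faceWidthMeters > 0.0f && faceHeightMeters > 0.0f);
    // Assumption: round up so a face never gets fewer pixels than its
    // world-space size implies at this density.
    const int w = static_cast<int>(std::ceil(faceWidthMeters * kPixelsPerMeter));
    const int h = static_cast<int>(std::ceil(faceHeightMeters * kPixelsPerMeter));
    return {w, h};
}
```

For the 4 x 2 meter wall above, `damageBufferSize(4.0f, 2.0f)` gives 192 x 96.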

Until the wall is first damaged these three pieces do not change. The simple planar display mesh allows me to use a standard shader and render the wall like any other entity in the scene until the damage shader is required.

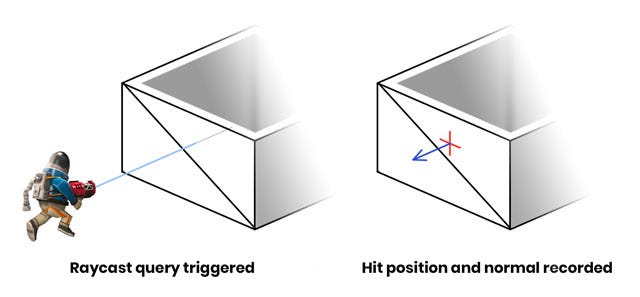

Damage is applied using raycasts. When a weapon is fired a raycast test is performed against all collision bodies in the dynamics world, with the ray originating at the weapon muzzle and extending toward the current aim direction. If the raycast intersects with the collision shape of a damageable face then a hit is registered. The world position and normal direction of the intersection are recorded and forwarded to the damageable face entity so it can record the hit to its damage buffer.

Figure 3. Surface normal of the hit is used for directional effects

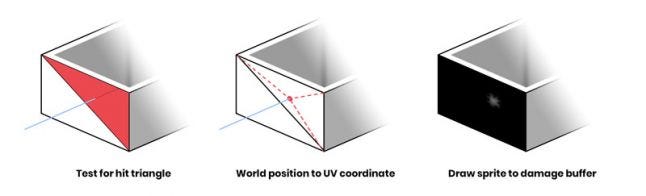

To actually apply damage a sprite needs to be drawn to the damage buffer. A black pixel on the damage buffer indicates no damage to that area and a fully white pixel indicates complete destruction of the area. Before the sprite can be drawn I need to calculate where to actually draw onto the damage buffer so it will match the hit location in world space. Barycentric coordinates will be used for this process so I first need to figure out which of the two triangles (that constitute the un-damaged plane collision shape) received the hit.

Figure 4. Steps required to draw the damage onto the buffer

Note: the barycentric method is a leftover from when the system was designed to work with arbitrary triangle meshes. If you're only dealing with flat, rectangular faces this conversion could be simplified.

Finding the correct triangle is done with a ray-triangle intersection test using the data from the raycast hit. Since there are only two triangles, this is quick. The world-space hit position is then transformed into the face's local space by multiplying it by the inverse transform of the wall entity. The distances between the hit position and the triangle vertices are calculated and used to generate a UV coordinate which aligns with the damage position on the wall.
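The triangle test and the UV conversion can be folded into one step, because the standard Möller-Trumbore ray/triangle algorithm produces barycentric weights as a by-product. This is a sketch of that swapped-in textbook technique, not necessarily the code used in the game. `Vec3`, `Vec2` and `hitToDamageUV` are hypothetical names, and the ray and triangle are assumed to already be in the face's local space. Calling it for each of the face's two triangles and keeping the one that hits gives the damage UV.

```cpp
#include <cassert>
#include <cmath>
#include <optional>

struct Vec3 { float x, y, z; };
struct Vec2 { float u, v; };

static Vec3 sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
static Vec3 cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
static float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

// Moller-Trumbore ray/triangle test. On a hit, returns the damage-buffer UV of
// the hit point, interpolated from the per-vertex UVs with the barycentric
// weights (1 - b1 - b2, b1, b2). Returns nullopt on a miss.
std::optional<Vec2> hitToDamageUV(Vec3 origin, Vec3 dir,
                                  Vec3 p0, Vec3 p1, Vec3 p2,
                                  Vec2 uv0, Vec2 uv1, Vec2 uv2) {
    assert(dot(dir, dir) > 0.0f);
    const float kEpsilon = 1e-7f;
    const Vec3 e1 = sub(p1, p0);
    const Vec3 e2 = sub(p2, p0);
    const Vec3 pvec = cross(dir, e2);
    const float det = dot(e1, pvec);
    if (std::fabs(det) < kEpsilon) return std::nullopt;  // ray parallel to triangle
    const float invDet = 1.0f / det;
    const Vec3 tvec = sub(origin, p0);
    const float b1 = dot(tvec, pvec) * invDet;
    if (b1 < 0.0f || b1 > 1.0f) return std::nullopt;
    const Vec3 qvec = cross(tvec, e1);
    const float b2 = dot(dir, qvec) * invDet;
    if (b2 < 0.0f || b1 + b2 > 1.0f) return std::nullopt;
    const float t = dot(e2, qvec) * invDet;
    if (t < 0.0f) return std::nullopt;  // triangle is behind the ray origin
    const float b0 = 1.0f - b1 - b2;
    return Vec2{b0 * uv0.u + b1 * uv1.u + b2 * uv2.u,
                b0 * uv0.v + b1 * uv1.v + b2 * uv2.v};
}
```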

Figure 5. An enlarged and brightened version of the damage sprite

The last step here is to draw the damage sprite to the damage buffer render texture like a stamp. The design of the sprite itself is the result of lots of trial and error to see what kind of shape worked well and had the intended effect (both visually and physically). The rotation of the sprite is randomized and the scale and brightness of the sprite are based on the magnitude of the damage. This allows more powerful hits to make larger holes. The sprite is drawn with additive blending so that successive damage sprites can "build" on each other if applied in the same area.
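A CPU-side sketch of the stamping step makes the blending rules concrete. Everything about the sprite here is an assumption: the real sprite is a hand-made texture drawn on the GPU, while this version uses a hypothetical ellipse with a linear falloff, and the `4.0f` and `0.5f` factors tying radius and brightness to magnitude are placeholders. `DamageBuffer` and `stampDamage` are hypothetical names. The add-then-clamp step mimics additive blending into an 8-bit render target.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// CPU stand-in for a damage buffer's red channel: 0 = intact, 1 = destroyed.
struct DamageBuffer {
    int width, height;
    std::vector<float> pixels;
    DamageBuffer(int w, int h)
        : width(w), height(h), pixels(static_cast<std::size_t>(w) * h, 0.0f) {}
    float& at(int x, int y) { return pixels[static_cast<std::size_t>(y) * width + x]; }
};

// Stamps a hypothetical elliptical sprite (bright centre, linear falloff) at a
// damage UV. Radius and brightness grow with magnitude; `angle` (radians) is
// the sprite rotation, randomised by the caller. Each pixel is added to and
// then clamped to 1, like additive blending into an 8-bit render target.
void stampDamage(DamageBuffer& buf, float u, float v, float magnitude, float angle) {
    assert(buf.width > 0 && buf.height > 0);
    if (magnitude <= 0.0f) return;
    const float radiusPx = 4.0f * magnitude;    // assumed scale factor
    const float brightness = 0.5f * magnitude;  // assumed brightness factor
    const float cx = u * buf.width, cy = v * buf.height;
    const float c = std::cos(angle), s = std::sin(angle);
    const int x0 = std::max(0, static_cast<int>(std::floor(cx - radiusPx)));
    const int x1 = std::min(buf.width - 1, static_cast<int>(std::ceil(cx + radiusPx)));
    const int y0 = std::max(0, static_cast<int>(std::floor(cy - radiusPx)));
    const int y1 = std::min(buf.height - 1, static_cast<int>(std::ceil(cy + radiusPx)));
    for (int y = y0; y <= y1; ++y) {
        for (int x = x0; x <= x1; ++x) {
            // Pixel centre relative to the stamp, rotated into sprite space.
            const float dx = (x + 0.5f) - cx, dy = (y + 0.5f) - cy;
            const float sx = c * dx + s * dy, sy = -s * dx + c * dy;
            // Ellipse half as tall as it is wide, so the rotation is visible.
            const float d = std::sqrt(sx * sx + 4.0f * sy * sy) / radiusPx;
            if (d >= 1.0f) continue;
            float& p = buf.at(x, y);
            p = std::min(1.0f, p + brightness * (1.0f - d));
        }
    }
}
```

Stamping twice in the same spot saturates the centre at 1.0, which is the "build up" behaviour additive blending gives.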

Figure 6. Mesh wireframe after tessellation. No damage has been applied.

When an untouched face is damaged for the first time a few things happen. First the display mesh, which up until this point was a two triangle plane, is tessellated. Similar to how the resolution of the damage buffer is calculated, there is a mesh density factor which describes "faces-per-meter". I use a value of 6, so my 4 x 2m wall results in a mesh that measures 24 x 12 square sections (576 triangles). The blue channel of the vertex color is used as a boolean value to mark which vertices are on the outermost edges of the plane mesh. This allows me to extrude those vertices in the geometry shader to create the wall thickness you see along the top edge.
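Here is a minimal sketch of the tessellation, assuming a face that lies in its local XY plane. It packs the data the way the article describes (unique damage UVs in the red and green channels of the vertex color, the edge flag in blue), while `WallVertex`, `tessellateFace` and the `tileSizeMeters` parameter used for the tiling UVs are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

// Hypothetical vertex layout for the tessellated face.
struct WallVertex {
    float x, y;        // local position on the face plane, in meters (z = 0)
    float u, v;        // tiling UVs for the wall texture
    float r, g, b, a;  // vertex color: r/g = unique damage-buffer UV, b = edge flag
};

struct WallMesh {
    std::vector<WallVertex> vertices;
    std::vector<std::uint32_t> indices;  // triangle list, two triangles per square
};

// Tessellates a width x height (meters) face at facesPerMeter squares per meter.
// tileSizeMeters (assumed) is the world size covered by one repeat of the texture.
WallMesh tessellateFace(float width, float height, int facesPerMeter, float tileSizeMeters) {
    assert(width > 0.0f && height > 0.0f && facesPerMeter > 0 && tileSizeMeters > 0.0f);
    const int cols = std::max(1, static_cast<int>(std::lround(width * facesPerMeter)));
    const int rows = std::max(1, static_cast<int>(std::lround(height * facesPerMeter)));

    WallMesh mesh;
    for (int j = 0; j <= rows; ++j) {
        for (int i = 0; i <= cols; ++i) {
            const float du = static_cast<float>(i) / cols;  // 0..1 across the face
            const float dv = static_cast<float>(j) / rows;  // 0..1 up the face
            const bool edge = (i == 0 || j == 0 || i == cols || j == rows);
            mesh.vertices.push_back({du * width, dv * height,
                                     du * width / tileSizeMeters, dv * height / tileSizeMeters,
                                     du, dv, edge ? 1.0f : 0.0f, 1.0f});
        }
    }

    const auto vertexIndex = [cols](int i, int j) {
        return static_cast<std::uint32_t>(j * (cols + 1) + i);
    };
    for (int j = 0; j < rows; ++j) {
        for (int i = 0; i < cols; ++i) {
            // Two counter-clockwise triangles per grid square.
            mesh.indices.insert(mesh.indices.end(),
                                {vertexIndex(i, j), vertexIndex(i + 1, j), vertexIndex(i + 1, j + 1),
                                 vertexIndex(i, j), vertexIndex(i + 1, j + 1), vertexIndex(i, j + 1)});
        }
    }
    return mesh;
}
```

With the values above, `tessellateFace(4.0f, 2.0f, 6, tile)` produces a 24 x 12 grid: 325 vertices and 576 triangles.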

Once damage has been applied, the face is flagged and starts to draw using a special damage shader. This shader samples from the damage buffer and uses a geometry stage to cull any faces that are fully damaged and construct a solid tapered edge at the borderline of the damaged area.

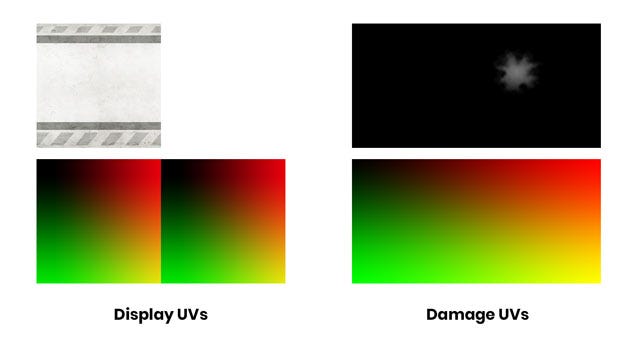

Figure 7. Visual representation of the two UV sets (lower) with the textures they sample (upper)

The wall geometry needs tiling UVs so the square wall texture can repeat along long sections without stretching. However, the damage buffer requires unique UVs that map 1-to-1 onto the wall geometry, so a second set of UVs is generated and stored in the red and green channels of the vertex color. This way each vertex of the wall maps to a unique location on the damage buffer.

This next bit is the part where I try to describe a shader using words. If you prefer to just read the pseudocode, scroll down a bit further.

In the geometry shader each triangle of the mesh is processed independently. The damage buffer is sampled at the damage UVs of the triangle's three vertices to determine whether the triangle should be discarded. If all three vertices are damaged beyond the threshold value, the entire triangle is discarded and the shader returns.

If none of the triangle's vertices have any damage, the triangle is drawn normally. These triangles actually need to be drawn twice: once regularly and then again extruded inwards with the normal and winding order inverted. This makes the wall look double-sided and gives it its thickness. Before finishing, the shader also checks which of these vertices are edge vertices via the vertex color attribute described earlier. If two of them lie on the edge of the mesh, the shader generates flat geometry to cover the top edge, masking the hollow area between the two wall faces.

The last case is the most involved. In this case one or more of the three vertices has a damage value greater than 0 but below the threshold of "fully damaged". Each damaged vertex is displaced so that the more damage it has, the further it is pushed inward, both into the wall and toward its neighbour vertices. This transform is applied to the original vertices as well as the inverted "inner wall" vertices. The result is a polygonal edge whose vertices draw closer together as more damage is applied, making the wall geometry thinner and thinner until the damage value reaches the threshold and the geometry is fully discarded.

If a triangle is partially damaged like this then that also means it's on the leading edge of a damaged area, so a bridge of geometry is generated between the two sides of the wall. This serves a similar purpose to the top edge geometry in that it masks the hollow area inside the wall exposed by the damage.

Damaged vertices interpolate between the regular wall texture and a noisy damage effect texture depending on how damaged they are, to give the edge a messy mangled appearance. The normals for all the damaged, modified geometry are also recalculated when the vertices are transformed.

```glsl
// Geometry stage: one invocation per input triangle.
// (#version, the output layout and the helper functions
// renderOriginalTriangle, renderEdgeTrim, transformDamagedVertices,
// renderSkirtEdge and renderTransformedTriangle are not shown.)
layout(triangles) in;

// Vertex attribute forwarded from vertex stage
// (r/g = damage-buffer UV, b = edge flag)
in vec4 aColor[];

uniform sampler2D uDamageBuffer;

// Threshold is a magic number
const float kDamageThreshold = 0.025;
const int kVertexCount = 3;

int damagedVertexCount;
int edgeCount;
bool damageState[kVertexCount];
bool isEdge[kVertexCount];
float damageValue[kVertexCount];

void main()
{
    damagedVertexCount = 0;
    edgeCount = 0;

    // Get initial triangle info
    for (int i = 0; i < kVertexCount; i++)
    {
        // Keep count of how many vertices lie on
        // the edge of the mesh
        isEdge[i] = aColor[i].z != 0.0;
        edgeCount += isEdge[i] ? 1 : 0;

        // Sample damage value per vertex. Explicit LOD 0, since
        // implicit derivatives only exist in the fragment stage.
        damageValue[i] = textureLod(uDamageBuffer, aColor[i].xy, 0.0).r;

        // Check if the vertex is damaged, keeping
        // count of how many vertices are damaged
        damageState[i] = damageValue[i] > kDamageThreshold;
        damagedVertexCount += damageState[i] ? 1 : 0;
    }

    // Is this whole triangle destroyed?
    if (damagedVertexCount == kVertexCount)
    {
        // Discard triangle, don't emit any geometry
        return;
    }
    // Is this whole triangle undamaged?
    else if (damagedVertexCount == 0)
    {
        // Render flat triangle
        renderOriginalTriangle(false);
        // Render flipped triangle
        renderOriginalTriangle(true);

        // If two vertices from this triangle lie
        // on the edge of the mesh then we want
        // to generate an extruded edge to cover the gap
        if (edgeCount > 1)
        {
            renderEdgeTrim();
        }
        return;
    }

    //
    // At this point we know the triangle must be partially damaged
    //

    // This function moves the vertices into their new
    // positions based on damageValue[] and re-calculates
    // normals from these new positions.
    transformDamagedVertices();

    // Figure out where we need to generate a skirt to
    // cover up partially damaged edges
    if (damagedVertexCount == 1)
    {
        // Find which vertex is culled
        int culledIndex = damageState[0] ? 0 : (damageState[1] ? 1 : 2);

        // Generate two skirt edges adjacent to the
        // culled vertex. renderSkirtEdge takes the
        // indices of the two input vertices for which we
        // want to draw the skirt.
        if (culledIndex == 0)
        {
            renderSkirtEdge(1, 0);
            renderSkirtEdge(0, 2);
        }
        else if (culledIndex == 1)
        {
            renderSkirtEdge(1, 0);
            renderSkirtEdge(2, 1);
        }
        else // (culledIndex == 2)
        {
            renderSkirtEdge(0, 2);
            renderSkirtEdge(2, 1);
        }
    }
    else // (damagedVertexCount == 2)
    {
        // Build full skirt around all three edges
        // of damaged triangle
        renderSkirtEdge(1, 0);
        renderSkirtEdge(2, 1);
        renderSkirtEdge(0, 2);
    }

    // Render using transformed vertex positions
    // from transformDamagedVertices()
    renderTransformedTriangle(false);
    // Render the same triangle flipped
    renderTransformedTriangle(true);
}
```
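The vertex displacement inside `transformDamagedVertices()` is only described in words, so here is a C++ sketch of one way it might work; it is not the game's shader code. Each vertex is pulled toward the triangle's centroid (standing in for its neighbours) and pushed against the face normal, both in proportion to a 0..1 damage weight, and the normal is then recomputed from the moved positions. `Triangle`, `transformDamaged`, `kPullToCentroid` and `halfThickness` are hypothetical, and how the weight is derived from the damage buffer sample is left to the caller.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cmath>

struct Vec3 { float x, y, z; };

static Vec3 add(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
static Vec3 sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
static Vec3 scale(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
static Vec3 cross(Vec3 a, Vec3 b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
static Vec3 normalize(Vec3 a) {
    const float len = std::sqrt(a.x * a.x + a.y * a.y + a.z * a.z);
    return len > 0.0f ? scale(a, 1.0f / len) : a;
}

struct Triangle {
    std::array<Vec3, 3> p;  // counter-clockwise when viewed from the front
    Vec3 normal;            // outward face normal
};

// damage[i] is a per-vertex weight in 0..1. halfThickness is the distance
// from this face to the middle of the wall.
Triangle transformDamaged(const Triangle& tri, const std::array<float, 3>& damage,
                          float halfThickness) {
    assert(halfThickness >= 0.0f);
    const float kPullToCentroid = 0.5f;  // hypothetical tuning value
    const Vec3 centroid = scale(add(add(tri.p[0], tri.p[1]), tri.p[2]), 1.0f / 3.0f);
    Triangle out = tri;
    for (int i = 0; i < 3; ++i) {
        const float d = std::clamp(damage[i], 0.0f, 1.0f);
        // Pull toward the neighbouring vertices (via the centroid)...
        Vec3 p = add(tri.p[i], scale(sub(centroid, tri.p[i]), kPullToCentroid * d));
        // ...and push into the wall, against the face normal.
        p = sub(p, scale(tri.normal, halfThickness * d));
        out.p[i] = p;
    }
    // Recalculate the normal from the displaced positions.
    out.normal = normalize(cross(sub(out.p[1], out.p[0]), sub(out.p[2], out.p[0])));
    return out;
}
```

Applying the same function to the flipped inner triangle, whose normal points the other way, moves both wall faces toward the middle, which is what makes the edge thin out as damage accumulates.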

This all demonstrates why the display mesh needed to be tessellated. The damage shader works on a per-vertex basis so the higher the density of the mesh, the more detailed the damage will appear.

Relative to the other shaders in the game, the damage shader is expensive, so the fewer vertices it needs to process the better. The tessellation density ("faces-per-meter") was chosen because increasing it further did not produce a noticeable visual improvement. That's just one of the advantages of making a game where the camera is pulled way back.

Figure 8. Damaged wall as it appears in game, wireframe overlay, and normals

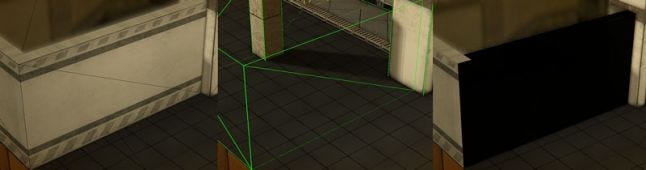

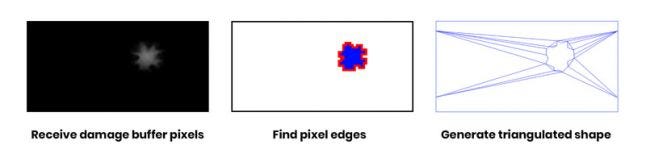

Any time a face receives damage the collision shape needs to be updated to match. This is done by extracting an outline of the affected area from the damage buffer and triangulating that outline into a new collision shape.

Figure 9. Drawing to and erasing the damage buffer using editor tools. The collision shape is visible in white.

To analyze the pixel data a copy of the damage buffer needs to be transferred from video memory to CPU-side memory. This can end up being a very slow operation which causes a stall in the render pipeline. To avoid this performance hit it's possible to trigger a request for a copy of the damage buffer as soon as the damage is applied, but the damage buffer data is not actually received until the next frame. This allows the graphics driver to defer the transfer operation until a time when it will have a lessened impact on performance. This means that the collision shape will have a one frame delay before it matches the shape of the damaged face, but this isn't noticeable even when running the game at low framerates. Without this technique there would be very distracting frame drops anytime a wall is damaged.

```cpp
// Damage event is triggered, damage sprite is drawn to damage buffer.
// ...

// Read pixels from damageBuffer into pixelBufferObject. The PBO must
// already have storage, e.g. from glBufferData(GL_PIXEL_PACK_BUFFER,
// bufferWidth * bufferHeight * 4, nullptr, GL_STREAM_READ).
// While a PBO is bound to GL_PIXEL_PACK_BUFFER, the last argument of
// glReadPixels is a byte offset into the PBO rather than a client pointer,
// which allows the driver to transfer the data asynchronously,
// preventing a stall.
glBindFramebuffer(GL_FRAMEBUFFER, damageBuffer);
glBindBuffer(GL_PIXEL_PACK_BUFFER, pixelBufferObject);
glReadPixels(0, 0, bufferWidth, bufferHeight, GL_RGBA, GL_UNSIGNED_BYTE, nullptr);
glBindBuffer(GL_PIXEL_PACK_BUFFER, 0);

// Continue updating the rest of the frame
// ...
// Render, Swap
// ...

// -- New frame begins ----------------------

// Destructible wall entity is waiting for pixel data from the last
// frame. When the wall entity gets updated it maps the pixel buffer
// object and retrieves the pixel data.
glBindBuffer(GL_PIXEL_PACK_BUFFER, pixelBufferObject);
const unsigned char* pixels = static_cast<const unsigned char*>(
    glMapBuffer(GL_PIXEL_PACK_BUFFER, GL_READ_ONLY));
if (pixels != nullptr)
{
    // Do work with pixels[] (bufferWidth * bufferHeight RGBA texels)
    // ...
    glUnmapBuffer(GL_PIXEL_PACK_BUFFER);
}
glBindBuffer(GL_PIXEL_PACK_BUFFER, 0);
```

The damage buffer pixels now need to be analyzed to extract an outline of the damaged area. A marching squares algorithm is used to scan through the pixels and find the edges of any bright shapes indicating a damaged area. This involves a few magic threshold and epsilon values which have been tuned through trial and error to provide a reliable result. This results in a set of 2D shapes whose edges are derived from pixel coordinates. These shapes are then simplified to remove extraneous noise and detail.
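The outline extraction can be sketched with the textbook marching squares algorithm. This version assumes the damage buffer's red channel has already been converted to a row-major float array, treats samples above `threshold` as damaged, and places segment endpoints at cell-edge midpoints rather than interpolating. Chaining the segments into closed outlines and simplifying them are separate steps not shown. `marchingSquares`, `Point` and `Segment` are hypothetical names.

```cpp
#include <cassert>
#include <vector>

struct Point { float x, y; };
struct Segment { Point a, b; };

// Marching squares over a single-channel image (row-major, w x h).
// Samples above `threshold` count as damaged. Samples outside the image are
// treated as undamaged so that shapes touching the border still close.
std::vector<Segment> marchingSquares(const std::vector<float>& img, int w, int h,
                                     float threshold) {
    assert(static_cast<int>(img.size()) == w * h);
    const auto inside = [&](int x, int y) {
        return x >= 0 && y >= 0 && x < w && y < h && img[y * w + x] > threshold;
    };
    std::vector<Segment> out;
    for (int y = -1; y < h; ++y) {
        for (int x = -1; x < w; ++x) {
            // Cell corners in order: top-left, top-right, bottom-right, bottom-left.
            const bool c[4] = {inside(x, y), inside(x + 1, y),
                               inside(x + 1, y + 1), inside(x, y + 1)};
            // Edge k joins corner k and corner (k + 1) % 4; these are its midpoints.
            const float fx = static_cast<float>(x), fy = static_cast<float>(y);
            const Point mid[4] = {{fx + 0.5f, fy}, {fx + 1.0f, fy + 0.5f},
                                  {fx + 0.5f, fy + 1.0f}, {fx, fy + 0.5f}};
            int crossed[4];
            int n = 0;
            for (int k = 0; k < 4; ++k)
                if (c[k] != c[(k + 1) % 4]) crossed[n++] = k;
            if (n == 2) {
                out.push_back({mid[crossed[0]], mid[crossed[1]]});
            } else if (n == 4) {
                // Saddle case: cut off each damaged corner separately so
                // diagonal neighbours stay as two shapes.
                for (int k = 0; k < 4; ++k)
                    if (c[k]) out.push_back({mid[(k + 3) % 4], mid[k]});
            }
        }
    }
    return out;
}
```

A single bright pixel yields a closed diamond of four segments; the saddle rule is one of two valid choices and decides whether diagonally touching damage merges into one hole.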

Figure 10. Generating a new collision shape from a bitmap

With all the required information gathered, the new collision shape can be built. The simplified shapes are fed into a triangulation library, which returns a set of triangles matching the contours of the input shapes. The original intact collision shape is discarded and a new, more complex one is built in its place. The physics library makes this easier by providing a collision shape type that can be generated directly from a list of triangles.
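The article relies on a triangulation library for this step. As a stand-in illustration only, here is ear clipping, one of the simplest triangulation methods. It handles a single simple polygon in counter-clockwise order and does not support holes, so a wall with a hole punched through its interior would need a library that handles holes (or hole bridging added on top). `earClip`, `P2`, `cross2` and `inTriangle` are hypothetical names.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <vector>

struct P2 { float x, y; };

// Twice the signed area of triangle (o, a, b); positive when counter-clockwise.
static float cross2(P2 o, P2 a, P2 b) {
    return (a.x - o.x) * (b.y - o.y) - (a.y - o.y) * (b.x - o.x);
}

// True if p lies inside or on counter-clockwise triangle (a, b, c).
static bool inTriangle(P2 p, P2 a, P2 b, P2 c) {
    return cross2(a, b, p) >= 0.0f && cross2(b, c, p) >= 0.0f && cross2(c, a, p) >= 0.0f;
}

// Ear clipping for one simple polygon (counter-clockwise, no holes, no
// self-intersections). Returns triangles as index triples into `poly`.
std::vector<std::array<int, 3>> earClip(const std::vector<P2>& poly) {
    assert(poly.size() >= 3);
    std::vector<int> remaining(poly.size());
    for (std::size_t i = 0; i < poly.size(); ++i) remaining[i] = static_cast<int>(i);

    std::vector<std::array<int, 3>> tris;
    while (remaining.size() > 3) {
        bool clipped = false;
        const std::size_t n = remaining.size();
        for (std::size_t i = 0; i < n && !clipped; ++i) {
            const int ia = remaining[(i + n - 1) % n];
            const int ib = remaining[i];
            const int ic = remaining[(i + 1) % n];
            const P2 a = poly[ia], b = poly[ib], c = poly[ic];
            if (cross2(a, b, c) <= 0.0f) continue;  // reflex or degenerate corner
            bool blocked = false;
            for (int j : remaining) {
                if (j != ia && j != ib && j != ic && inTriangle(poly[j], a, b, c)) {
                    blocked = true;
                    break;
                }
            }
            if (blocked) continue;
            tris.push_back({ia, ib, ic});  // (a, b, c) is an ear: cut it off
            remaining.erase(remaining.begin() + static_cast<std::ptrdiff_t>(i));
            clipped = true;
        }
        if (!clipped) return tris;  // invalid input; give up rather than loop forever
    }
    tris.push_back({remaining[0], remaining[1], remaining[2]});
    return tris;
}
```

The resulting index triples, mapped back to the face's 3D positions, are the kind of triangle list a triangle-mesh collision shape can be built from.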

Since they each reference the same source damage buffer, the gaps in the triangulated collision shape will match the gaps in the display mesh. Now you can walk through a wall!

Navigation for NPCs in Radio Viscera uses a node-based A* solver. Path node entities are placed throughout a level and then automatically linked together using visibility and accessibility checks from node to node. When an NPC needs to travel to a new location, it runs the path solver between the node nearest to its current location and the node nearest to the destination.
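A compact A* over such a node graph might look like the following sketch. It assumes each node stores its world position and the indices of the nodes it links to (on both ends of each link), uses straight-line distance as both edge cost and heuristic, and finds the start and goal nodes with a linear nearest-node scan. `PathNode`, `nearestNode` and `findPath` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <functional>
#include <limits>
#include <queue>
#include <utility>
#include <vector>

// Hypothetical path node: world position plus indices of linked nodes.
struct PathNode {
    float x, y, z;
    std::vector<int> links;
};

static float nodeDistance(const std::vector<PathNode>& nodes, int a, int b) {
    const float dx = nodes[a].x - nodes[b].x;
    const float dy = nodes[a].y - nodes[b].y;
    const float dz = nodes[a].z - nodes[b].z;
    return std::sqrt(dx * dx + dy * dy + dz * dz);
}

// Linear scan for the node closest to a world position.
int nearestNode(const std::vector<PathNode>& nodes, float x, float y, float z) {
    int best = -1;
    float bestSq = std::numeric_limits<float>::infinity();
    for (int i = 0; i < static_cast<int>(nodes.size()); ++i) {
        const float dx = nodes[i].x - x, dy = nodes[i].y - y, dz = nodes[i].z - z;
        const float sq = dx * dx + dy * dy + dz * dz;
        if (sq < bestSq) { bestSq = sq; best = i; }
    }
    return best;
}

// A* from start to goal. Returns node indices from start to goal, or an
// empty vector if the goal is unreachable.
std::vector<int> findPath(const std::vector<PathNode>& nodes, int start, int goal) {
    assert(start >= 0 && goal >= 0);
    const float inf = std::numeric_limits<float>::infinity();
    std::vector<float> g(nodes.size(), inf);  // best known cost from start
    std::vector<int> parent(nodes.size(), -1);
    using Entry = std::pair<float, int>;      // (cost + heuristic, node)
    std::priority_queue<Entry, std::vector<Entry>, std::greater<Entry>> open;

    g[start] = 0.0f;
    open.push({nodeDistance(nodes, start, goal), start});
    while (!open.empty()) {
        const auto [f, n] = open.top();
        open.pop();
        if (n == goal) break;
        if (f > g[n] + nodeDistance(nodes, n, goal)) continue;  // stale entry
        for (int m : nodes[n].links) {
            const float cost = g[n] + nodeDistance(nodes, n, m);
            if (cost < g[m]) {
                g[m] = cost;
                parent[m] = n;
                open.push({cost + nodeDistance(nodes, m, goal), m});
            }
        }
    }
    if (g[goal] == inf) return {};
    std::vector<int> path;
    for (int n = goal; n != -1; n = parent[n]) path.push_back(n);
    std::reverse(path.begin(), path.end());
    return path;
}
```

A path request then becomes `findPath(nodes, nearestNode(nodes, npcX, npcY, npcZ), nearestNode(nodes, destX, destY, destZ))`, where the coordinates are the NPC's and the destination's positions.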

Figure 11. Magenta lines are the navigation graph, the red line is the direct route to the NPC's destination and the green line is the planned route.

To navigate through a destroyed wall NPCs need to know if they can pass through at that location. The navigation network needs to be updated in response to damage events.

Figure 12. Top down view of the navigation graph after a damage event

When a damage event happens, a search is done on the path node network to gather all nodes within a certain radius of the hit. From this set the two closest nodes on either side of the damageable face are selected and a visibility check is performed between them. If the two nodes have a clear line of sight to each other, the damaged area is large enough for a character to pass through. Connecting these two nodes directly sometimes results in oblique paths, which are more likely to cause characters to get stuck. Instead, a new temporary path node is inserted into the graph on the ground directly beneath the damaged area to create a more orthogonal route. The two original nodes are bridged by the new temporary node, and NPCs can now pathfind through the wall as if it had never been there. These nodes are labeled "temporary" because they are never saved and are wiped whenever the level resets.
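The graph update can be sketched as follows, under several assumptions: the world is Y-up, the caller knows the floor height beneath the hit (`groundY`), the visibility test is passed in as a callback (in practice likely a physics raycast), and temporary nodes are always appended after the level's authored nodes so they can be dropped on reset. `NavNode`, `bridgeThroughDamage` and `removeTemporaryNodes` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <functional>
#include <vector>

struct Vec3 { float x, y, z; };

// Hypothetical navigation node. Temporary nodes are never saved.
struct NavNode {
    Vec3 pos;
    std::vector<int> links;
    bool temporary = false;
};

static Vec3 sub(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
static float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

// Finds the closest node on each side of the face within `radius` of the hit,
// checks that they can see each other, and bridges them through a temporary
// node on the ground beneath the hit. Returns the new node index, or -1.
int bridgeThroughDamage(std::vector<NavNode>& nodes, Vec3 hit, Vec3 faceNormal,
                        float groundY, float radius,
                        const std::function<bool(Vec3, Vec3)>& hasLineOfSight) {
    assert(radius > 0.0f);
    int front = -1, back = -1;
    float frontDist = radius, backDist = radius;
    for (int i = 0; i < static_cast<int>(nodes.size()); ++i) {
        const Vec3 d = sub(nodes[i].pos, hit);
        const float dist = std::sqrt(dot(d, d));
        const float side = dot(d, faceNormal);  // sign tells which side of the wall
        if (side > 0.0f && dist <= frontDist) { front = i; frontDist = dist; }
        if (side < 0.0f && dist <= backDist) { back = i; backDist = dist; }
    }
    if (front < 0 || back < 0) return -1;
    // A clear line of sight means the hole is big enough to pass through.
    if (!hasLineOfSight(nodes[front].pos, nodes[back].pos)) return -1;

    const int id = static_cast<int>(nodes.size());
    nodes.push_back({{hit.x, groundY, hit.z}, {front, back}, true});
    nodes[front].links.push_back(id);
    nodes[back].links.push_back(id);
    return id;
}

// On level reset: drop temporary nodes and any links to them. Assumes all
// temporary nodes were appended after the authored ones.
void removeTemporaryNodes(std::vector<NavNode>& nodes) {
    while (!nodes.empty() && nodes.back().temporary) nodes.pop_back();
    const int count = static_cast<int>(nodes.size());
    for (NavNode& n : nodes) {
        n.links.erase(std::remove_if(n.links.begin(), n.links.end(),
                                     [count](int link) { return link >= count; }),
                      n.links.end());
    }
}
```

Routing through a node on the floor beneath the hit, rather than linking the two side nodes directly, is what gives the orthogonal crossing described above.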

Figure 13. Crouching played at half speed to demonstrate the effect
